You just wrapped up what felt like a phenomenal interview. The candidate had all the right answers, their resume sparkled with impressive credentials, and they even made you laugh twice. You extend the offer, convinced you've found your next star performer.

Three months later, you're staring at mediocre work, missed deadlines, and wondering what happened. The person who interviewed brilliantly can't actually do the job.

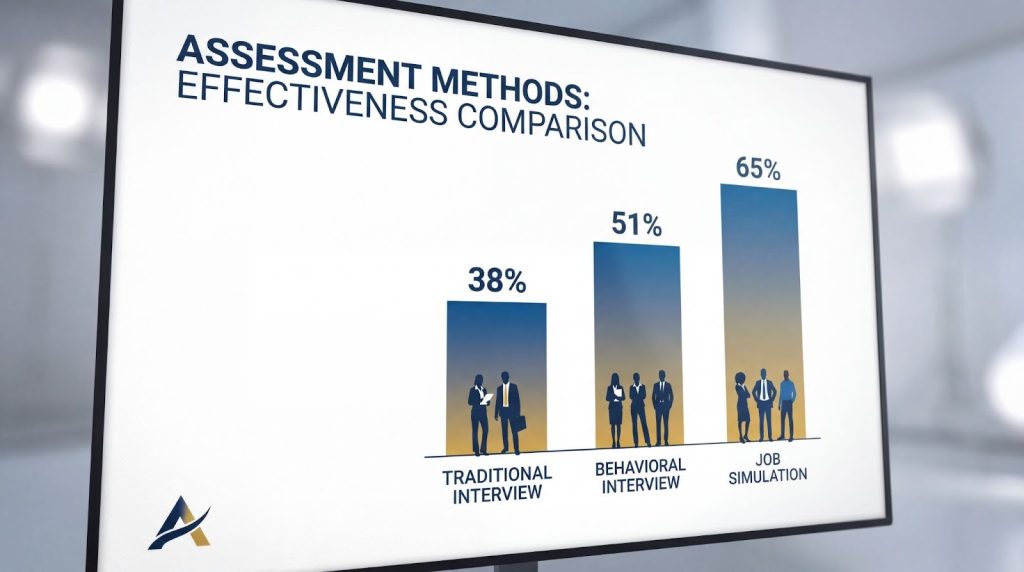

Sound familiar? You're not alone. Traditional unstructured interviews have a predictive validity of just 38%, meaning interview ratings correlate only modestly with later job performance. But there's a better way. Job simulation interviews replicate actual work tasks to assess real performance, not just interview performance. According to Schmidt and Hunter's landmark meta-analysis of 100 years of hiring research, combining structured interviews with work simulations achieves 51-65% predictive validity, making the combination one of the most accurate hiring tools available.

In this guide, you'll discover exactly how job simulation interviews work, see 10 real-world examples across industries from healthcare to hospitality, and get a practical implementation framework you can use immediately. Whether you're hiring your first virtual assistant or building an entire recruitment engine, these methods will help you predict job success with remarkable accuracy.

Key Takeaways

– Job simulation interviews achieve 51% higher predictive validity than resume screening by testing actual job skills rather than hypothetical scenarios

– 76% of employers now use skills-based tests in 2025, up from 68% in 2024, making simulations a mainstream hiring standard

– Real-world examples span 7 industries, from healthcare HIPAA scenarios to hospitality guest recovery exercises, and are adaptable to any sector

– Effective simulations combine cognitive assessments, work samples, and structured evaluation using Schmidt-Hunter validated methodology

– Implementation requires 15-20 minute realistic exercises with objective scoring rubrics calibrated through pilot testing with current employees

– Post-simulation insights directly inform personalized onboarding and coaching plans, improving retention by up to 40%

What Are Job Simulation Interviews?

Definition and Core Concept

A job simulation interview is a pre-employment assessment that replicates real job tasks to evaluate candidate performance in realistic scenarios. Unlike traditional behavioral interviews, which ask candidates to recount past experiences with prompts like "Tell me about a time when...", job simulations require candidates to demonstrate skills through practical exercises. That is why they tend to predict job performance more accurately.

Think of it this way: If you're hiring a pianist, would you rather hear them describe how they play Chopin, or actually listen to them perform? Job simulations are the "live performance" of hiring. They test what candidates can actually do, not just what they claim they can do.

The shift from asking to observing represents a fundamental change in how progressive organizations approach talent evaluation. Instead of relying on candidates' storytelling abilities or their skill at rehearsing interview answers, you get to watch them work in conditions that mirror the actual job.

The Three Components of Effective Job Simulations

Every successful job simulation interview includes three essential elements working in harmony.

First, you need realistic scenario design. The tasks must mirror actual daily responsibilities that matter for job success. If your property management coordinator spends 30% of their time coordinating maintenance requests, that should be reflected in your simulation. Generic scenarios that could apply to any role won't give you meaningful insights.

Second, establish objective evaluation criteria. This means creating standardized scoring rubrics, typically using 1-5 scales across multiple dimensions. For example, a customer service simulation might evaluate communication clarity, problem-solving approach, empathy demonstrated, and policy adherence. The rubric defines what "excellent" versus "poor" looks like for each criterion with specific observable behaviors.

Third, design for time-bound execution. Most effective simulations run 15-20 minutes. This window balances thoroughness with efficiency, giving you enough time to observe meaningful performance patterns without exhausting candidates or consuming excessive hiring resources. Longer simulations show diminishing returns after about 25 minutes.

When these three components align—realistic tasks, objective measurement, and appropriate duration—you create an assessment that predicts job performance with remarkable accuracy.

Job Tryout vs. Simulation: Understanding the Difference

Here's a distinction worth understanding: job tryouts and job simulations aren't quite the same thing, though people often use the terms interchangeably.

A job tryout typically means a candidate performs actual work for days or weeks, sometimes as a paid trial or contractor arrangement. They're literally doing the job to see if it fits. This approach works beautifully for startups, creative roles, or specialized positions where the real work environment heavily influences success.

Job simulations, on the other hand, condense the assessment into minutes or hours. They're designed replicas of key tasks, not the full job experience. Think of medical simulation labs where doctors practice procedures, or flight simulators where pilots train. The simulation captures the essential elements without requiring weeks of real-world trial.

Simulations are far more scalable for high-volume hiring. If you're evaluating twenty customer service candidates, you can't have all of them work for two weeks. But you can run all twenty through a standardized 20-minute call handling simulation in a few days.

The choice between tryouts and simulations depends on your hiring context. For most operational roles—executive assistants, coordinators, customer service, administrative support—simulations provide the sweet spot of predictive power and practical efficiency.

The Science Behind Job Simulations: Why They Work

Schmidt-Hunter Predictive Validity Research

If you want to understand why job simulations outperform traditional interviews, you need to know about Frank Schmidt and John Hunter's groundbreaking research. Over several decades, they conducted meta-analyses covering 100 years of personnel selection studies, examining what actually predicts job performance.

Their findings revolutionized hiring science. Unstructured interviews, the "let's just chat and see if I like them" approach, showed a predictive validity of only 0.38, usually quoted as 38%. That figure is a correlation between interview ratings and later job performance, not the share of hires who work out. Structured interviews, where every candidate answers the same questions evaluated against consistent criteria, jumped to 0.51, or 51%.

But here's where it gets interesting. When organizations combined structured interviews with cognitive ability tests and work sample assessments, predictive validity climbed to 65%. That's nearly double the accuracy of traditional interviewing.

This isn't theoretical. The research examined real hiring outcomes across industries, job types, and decades of data. Work samples and simulations consistently emerged as among the most powerful predictors of who would actually succeed in the role.

The implication is clear: if you want to hire better, you need to watch people do the work, not just hear them talk about the work.

Why Skills-Based Simulations Outperform Resume Screening

Resume screening faces an uncomfortable truth: candidates routinely overstate their capabilities. Not necessarily through outright lying, but through inflation, selective presentation, and strategic framing. Someone lists "advanced Excel skills" when they've only used basic formulas. Another claims "project management experience" for work that involved attending meetings.

By 2025, 85% of employers have adopted skills-based hiring practices, up from 81% just one year earlier. This rapid growth reflects a collective realization that credentials and claimed experience don't reliably predict performance.

Job simulations cut through the noise. When you ask a bookkeeper candidate to actually process ten transactions in QuickBooks, you discover their real proficiency level within minutes. When you watch an executive assistant prioritize a chaotic inbox, you see how they think under pressure. The gap between claimed skills and demonstrated skills becomes immediately visible.

Simulations also reveal performance under realistic conditions. Interview answers happen in calm, controlled settings where candidates have time to think and craft responses. Work simulations introduce the actual pressures of the job—time constraints, incomplete information, competing priorities, system limitations. You see how candidates perform when it actually counts.

This authentic assessment dramatically reduces hiring mistakes. You're not betting on someone's interview polish or resume formatting. You're making decisions based on observed capability.

How Job Simulations Reduce Hiring Bias

Traditional hiring is riddled with unconscious bias. Interviewers make snap judgments based on where someone went to school, gaps in their resume, their accent, their age, or whether they remind the interviewer of someone they know. These subjective assessments have nothing to do with job performance, yet they influence hiring decisions constantly.

Job simulations shift the focus from subjective judgments to objective performance. When you evaluate a candidate based on how well they handled a customer complaint scenario using a standardized rubric, bias has far less room to operate. The scoring criteria are predefined. The task is identical for every candidate. The evaluation focuses on observable behaviors, not gut feelings about "culture fit."

This approach levels the playing field for non-traditional candidates. Someone who changed careers at 40, attended a community college instead of an Ivy League school, or took time off for caregiving can demonstrate their actual capabilities. Their performance on the simulation matters, not their pedigree.

Standardized rubrics and multiple evaluators further reduce bias. When two or three people independently score the same simulation and compare notes, individual biases tend to cancel out. Patterns in the data become clear, while idiosyncratic preferences fade into background noise.

For organizations serious about diversity, equity, and inclusion, job simulations aren't just nice to have—they're essential infrastructure for merit-based hiring.

10 Real-World Job Simulation Examples

Let's move from theory to practice. Here are ten actual job simulations used by high-performing recruitment teams across industries. Each example includes the scenario design, evaluation criteria, and real outcomes where available.

Example 1: Executive Assistant – Inbox Management Simulation

Industry: Tech Startups

The Scenario: Candidates receive access to a mock email inbox containing 25 messages with competing priorities. There are scheduled meeting conflicts, an urgent request from the CEO, vendor communications requiring follow-up, calendar coordination needs, and several low-priority items creating noise. They have 20 minutes to prioritize, respond to key messages, delegate appropriately, and explain their decision-making process.

Evaluation Criteria: Assessors score candidates on prioritization logic (did they identify the truly urgent items?), communication clarity in their responses, calendar optimization skills, and crisis triage ability. The rubric specifically looks for whether candidates ask clarifying questions before acting, how they handle conflicting priorities, and whether their tone remains professional under pressure.

Real Outcome: Tech startups using this simulation report that candidates who score in the top quartile consistently receive higher performance ratings after 90 days compared to those hired through traditional interviews alone.

Example 2: Learning & Development Specialist – E-Learning Module Creation

Industry: Professional Services

The Scenario: Candidates receive a specific learning objective and access to a simplified e-learning authoring tool. Their task: create a 10-minute training module on the assigned topic within 45 minutes. The module must include clear learning objectives, engaging content structure, at least one interactive element, and a brief assessment.

Evaluation Criteria: Evaluators assess instructional design quality (are concepts sequenced logically?), visual design decisions, learner engagement tactics, and whether the assessment actually measures the stated learning objectives.

Real Outcome: One recruitment team used this exact simulation to hire Julia, a Learning & Development Specialist for a professional services firm. Her simulation module demonstrated exceptional instructional clarity and engagement design. After hiring, Julia's training programs achieved a 79% completion rate—significantly above the industry average of 20-30% for online training.

Example 3: Property Management Coordinator – Vendor Coordination Exercise

Industry: Real Estate/Property Management

The Scenario: A mock maintenance request comes in from a resident. The candidate must coordinate with three different vendors (accessed through chat/email in the simulation), communicate professionally with the resident about timeline expectations, update the work order system correctly, and ensure proper follow-up protocols are documented. Time limit: 20 minutes.

Evaluation Criteria: Assessors evaluate communication professionalism (tone, clarity, empathy), system navigation competency, problem-solving approach when vendors provide conflicting information, and thoroughness of documentation.

Real Outcome: Property management companies using vendor coordination simulations consistently identify candidates who reduce resident complaint rates and improve vendor response times. One coordinator hired through this process increased repeat resident bookings by 20% within six months through exceptional communication and follow-through.

Example 4: Healthcare Scheduling Coordinator – HIPAA Compliance Scenario

Industry: Healthcare

The Scenario: In this role-play simulation, a "patient" (played by an assessor or via recorded scenario) calls requesting copies of their medical records. The candidate must verify the caller's identity using proper HIPAA protocols, process the request correctly, document the interaction appropriately, and handle a secondary scenario where someone asks for records they're not authorized to access.

Evaluation Criteria: Compliance adherence is paramount—did the candidate follow every required verification step? Additional criteria include empathy in communication (balancing security with compassion), documentation accuracy, and security awareness when handling the non-compliant request.

Real Outcome: Healthcare practices using this simulation dramatically reduce compliance violations. Candidates who can't navigate HIPAA requirements in the simulation are screened out before they can make costly real-world mistakes.

Example 5: Customer Service Representative – Difficult Call Role-Play

Industry: Hospitality/Service

The Scenario: Candidates participate in a live role-play with a trained assessor playing an angry customer whose hotel room had maintenance issues. The customer is frustrated, threatens to leave a negative review, and demands compensation beyond standard policy. Candidates must de-escalate, show genuine empathy, offer a solution within company guidelines, and document the interaction. The scenario lasts 10-12 minutes.

Evaluation Criteria: Emotional intelligence receives heavy weight—can the candidate remain calm and empathetic while being criticized? Additional criteria include problem resolution creativity, brand voice consistency, and whether recovery offers balance customer satisfaction with company policy.

Real Outcome: Hospitality companies rooted in service excellence culture use these simulations to identify candidates with natural de-escalation skills. Those who score highest on emotional intelligence criteria consistently receive better guest satisfaction scores and recover more situations before they escalate to management.

Example 6: Sales Development Representative – Cold Outreach Simulation

Industry: SaaS/Tech

The Scenario: Candidates receive a target prospect company name and LinkedIn profile. They have 15 minutes to research the company, identify a relevant pain point, craft a personalized cold outreach email, and prepare a brief talk track. Then they participate in a mock cold call where an assessor plays the prospect and delivers scripted objections.

Evaluation Criteria: Assessors evaluate research thoroughness (did they find relevant company information?), personalization quality in the email (generic template or genuinely customized?), objection handling skills, and persistence without pushiness during the call.

Real Outcome: SaaS companies hiring SDRs through this process identify candidates who ramp faster to quota attainment. The simulation reveals whether someone can handle rejection professionally and pivot their approach—skills impossible to assess in traditional interviews.

Example 7: Social Media Manager – Content Creation Work Sample

Industry: Creative Agencies

The Scenario: Candidates receive brand guidelines and a campaign brief for a fictional (or real anonymized) client. They must create three social media posts optimized for different platforms—LinkedIn, Instagram, and Twitter—complete with copy and visual direction. Time limit: 30 minutes. They present their work and explain their strategic choices.

Evaluation Criteria: Brand voice alignment is critical—do the posts sound like this brand? Additional criteria include platform-specific optimization (not just copying the same message across channels), engagement potential based on copywriting quality, and visual creativity in the direction provided.

Real Outcome: Creative agencies report that this simulation identifies candidates who understand platform nuances far better than portfolio reviews alone. Someone might have a beautiful portfolio from past work, but the simulation reveals whether they can adapt to a new brand voice quickly.

Example 8: Bookkeeper – QuickBooks Transaction Processing

Industry: Professional Services

The Scenario: Candidates work in a test QuickBooks environment with access to a mock company file. They must process ten transactions with varying complexity: vendor bill payments, invoice categorization, expense reconciliation, and one transaction with intentional ambiguity requiring them to ask a clarifying question. Time limit: 25 minutes.

Evaluation Criteria: Accuracy is weighted heavily—did they categorize transactions correctly? Additional criteria include speed and efficiency, software proficiency (navigating without constant help), and attention to detail during reconciliation checks.

Real Outcome: Accounting firms using this simulation eliminate candidates who claim "advanced QuickBooks skills" on their resume but struggle with basic workflows. The simulation also identifies candidates who ask clarifying questions before processing ambiguous transactions—a crucial skill for real accounting work.

Example 9: Operations Coordinator – Project Management Ambiguity Test

Industry: Startups

The Scenario: Candidates receive a deliberately vague project brief with conflicting stakeholder priorities and unclear deadlines. Their task: Ask clarifying questions, document assumptions they're making, identify potential risks, create a preliminary action plan, and explain their approach. This is presented as a working session with an assessor playing the "project sponsor." Duration: 20 minutes.

Evaluation Criteria: The quality and thoroughness of clarifying questions receive the highest weight—did they dig deeper before diving into solutions? Additional criteria include how well they documented assumptions, risk identification ability, and whether their action plan shows structured thinking.

Real Outcome: Startups thrive or die based on how well team members handle ambiguity. This simulation predicts which candidates will proactively seek clarity rather than making costly assumptions. Those who score well consistently become the coordinators who "just figure things out" without constant hand-holding.

Example 10: Virtual Assistant – Multi-Task Stress Test

Industry: Cross-Functional

The Scenario: This simulation tests multitasking and prioritization under pressure. Candidates must simultaneously handle four mini-tasks: schedule a meeting between three people with calendar conflicts, research vendor options for an upcoming purchase, draft a professional email response, and take a brief mock phone call. Tasks arrive with staggered timing and competing urgency. Time limit: 15 minutes.

Evaluation Criteria: Task completion rate matters, but not at the expense of quality. Assessors evaluate whether quality was maintained across tasks, how well the candidate managed stress (observable through tone and composure), and the logic behind their prioritization decisions.

Real Outcome: Companies hiring virtual assistants use this simulation to identify candidates who can truly multitask effectively—a critical but hard-to-assess skill. Those who score well maintain high performance even when managing executives with demanding, fast-paced needs.

These ten examples demonstrate the versatility of job simulations across industries and role types. The common thread: each simulation replicates actual daily responsibilities while maintaining objective evaluation standards. Whether you're hiring for healthcare, hospitality, or tech, the principle remains the same—watch candidates do the work.

Job Simulations vs. Traditional Interviews: A Data-Driven Comparison

Understanding when to use each assessment method helps you build a comprehensive hiring process. Here's how the three main interview approaches stack up against each other:

| Criteria | Traditional Interview | Behavioral Interview | Job Simulation |
| --- | --- | --- | --- |
| Predictive Validity | 38% (unstructured) | 51% (structured) | 51-65% (combined with cognitive tests) |
| Bias Risk | High (subjective judgments) | Moderate (still relies on storytelling) | Low (objective performance) |
| Assessment Focus | Past experience claims | Behavioral examples (STAR method) | Actual skill demonstration |
| Candidate Prep Impact | High (can rehearse answers) | Moderate (can practice frameworks) | Low (performance-based) |
| Time Investment | 30-60 min per candidate | 45-60 min per candidate | 15-30 min assessment + evaluation |
| Scalability | High (easy to conduct) | High (structured questions) | Moderate (requires design upfront) |
| Insight Quality | Surface-level | Moderate depth | Deep (observable performance) |

When to Use Each Method

The most effective hiring processes don't pick one method and ignore the others. Instead, they strategically combine approaches based on what each assesses best.

Use traditional conversational interviews for culture fit baseline and communication style assessment. These informal conversations help you understand whether someone will mesh with your team's working style and values. They're also useful for explaining the role, answering candidate questions, and building relationship rapport.

Deploy behavioral interviews for leadership roles, complex problem-solving positions, and roles where past experience provides genuine predictive value. The STAR method (Situation, Task, Action, Result) helps candidates structure their examples, and you gain insight into how they've handled similar situations before.

Implement job simulations for roles with clearly defined, observable tasks. This includes most operational positions—coordinators, assistants, customer service, technical roles, creative positions. Anywhere you can realistically replicate key job tasks in 15-30 minutes, simulations provide the highest predictive accuracy.

The best practice combines all three methods into a comprehensive assessment. Use traditional interviews for initial screening and culture alignment, behavioral interviews for situational judgment and problem-solving approaches, and job simulations for skills validation. This integrated approach achieves the highest predictive validity—the 51-65% range that dramatically improves hiring quality.

How to Implement Job Simulations in Your Hiring Process

Ready to build job simulations for your organization? Follow this seven-step framework that high-performing recruitment teams use to design, validate, and continuously improve their simulation process.

Step 1: Conduct Job Task Analysis

Start by interviewing your top performers in the role you're hiring for. Ask them to walk through a typical workday and identify the 5-7 tasks that most impact their success. Look for activities that consume significant time and directly influence key outcomes.

Prioritize tasks based on both frequency and business impact. A task performed daily matters more than a quarterly activity. Something that directly affects customer satisfaction or revenue deserves more weight than purely administrative work.

Document not just what tasks happen, but the complexity and conditions surrounding them. Does the task require judgment calls? Does it happen under time pressure? Are there common obstacles or constraints? These contextual details inform your scenario design.

Finally, identify which tasks can be realistically simulated in 15-20 minutes. Some job responsibilities are too complex or require too much context to assess in a brief simulation. Focus on the high-impact tasks that can be condensed into an effective assessment window.
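If it helps to make the prioritization concrete, here is a minimal sketch of ranking tasks by frequency and business impact, then dropping anything too long to simulate. The task names, the times-per-month estimates, the 1-5 impact scale, and the multiply-the-two scoring rule are all assumptions made for illustration, not a validated method.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    times_per_month: int      # rough frequency estimate from top-performer interviews
    impact: int               # assumed 1-5 rating of business impact
    minutes_to_simulate: int  # estimated time needed to simulate the task


def rank_tasks(tasks, max_minutes=20):
    """Keep tasks that fit the time window, sorted by frequency x impact (highest first)."""
    simulatable = [t for t in tasks if t.minutes_to_simulate <= max_minutes]
    return sorted(simulatable, key=lambda t: t.times_per_month * t.impact, reverse=True)


# Hypothetical task list for a property management coordinator.
tasks = [
    Task("Coordinate maintenance requests", 60, 4, 20),
    Task("Prepare monthly vendor report", 1, 3, 90),  # too long to simulate
    Task("Answer resident emails", 100, 3, 15),
    Task("File paperwork", 40, 1, 10),
]

ranked = rank_tasks(tasks)
for task in ranked:
    print(task.name, task.times_per_month * task.impact)
```

The top one or two tasks on a list like this are reasonable candidates for the scenario you design in Step 2.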

Step 2: Design Realistic Scenarios

Your simulation scenario must mirror actual work conditions as closely as possible. If your customer service reps typically handle difficult customers who are already frustrated when they call, your simulation should reflect that reality—not an idealized version where everyone is calm and reasonable.

Ensure scenarios have clear success criteria, but avoid making them so prescriptive that there's only one correct approach. The best simulations allow for different valid strategies, revealing candidates' problem-solving styles and priorities.

Include realistic constraints that exist in the actual job. If your coordinators usually work with incomplete information and tight deadlines, build that into the simulation. If your assistants must navigate multiple software systems simultaneously, incorporate that complexity.

One caution: avoid "gotcha" scenarios designed to trick candidates. Your goal is to assess capability, not to see who can spot a trap. Challenging scenarios are fine. Unfair scenarios that test for things unrelated to job success are not.

Step 3: Develop Objective Scoring Rubrics

Create standardized scoring rubrics using 1-5 rating scales for each evaluation dimension. Most effective simulations assess 4-6 separate criteria, each with its own rubric.

For each criterion, define what excellent performance looks like with specific observable behaviors. For example, "Communication Clarity" might define a 5 as "Candidate used plain language, confirmed understanding, and adapted communication style based on audience needs" while a 2 might be "Candidate used jargon without explanation and didn't check for understanding."

Be as specific as possible. Vague criteria like "showed good judgment" leave too much room for subjective interpretation. Behavioral anchors like "asked clarifying questions before proceeding" or "prioritized urgent customer request over routine administrative task" provide consistency.

Ensure your criteria actually align with what drives success in the role. If communication style doesn't impact job performance, don't include it in your rubric. Every evaluation dimension should connect directly to outcomes that matter.
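One way to keep a rubric consistent is to write it down as data. The sketch below stores behavioral anchors next to each criterion and converts 1-5 ratings into a single percentage. The criterion names, the weights, and the 0-100 conversion are hypothetical choices for this example; your own rubric should come from your job task analysis.

```python
# Hypothetical rubric: each criterion has a weight and behavioral anchors keyed by score.
RUBRIC = {
    "communication_clarity": {
        "weight": 40,
        "anchors": {
            5: "Used plain language, confirmed understanding, adapted style to audience",
            2: "Used jargon without explanation and didn't check for understanding",
        },
    },
    "prioritization": {
        "weight": 35,
        "anchors": {5: "Prioritized urgent customer request over routine administrative task"},
    },
    "clarifying_questions": {
        "weight": 25,
        "anchors": {5: "Asked clarifying questions before proceeding"},
    },
}


def weighted_score(ratings, rubric=RUBRIC):
    """Combine 1-5 ratings into a 0-100 percentage using the rubric weights."""
    if set(ratings) != set(rubric):
        raise ValueError("ratings must cover every rubric criterion exactly once")
    for criterion, rating in ratings.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"{criterion}: rating {rating} is outside the 1-5 scale")
    total_weight = sum(c["weight"] for c in rubric.values())
    weighted_sum = sum(rubric[name]["weight"] * rating for name, rating in ratings.items())
    # Map the weighted mean from the 1-5 scale onto 0-100.
    return round((weighted_sum / total_weight - 1) / 4 * 100, 1)


candidate = {"communication_clarity": 4, "prioritization": 3, "clarifying_questions": 5}
print(weighted_score(candidate))
```

Keeping the anchors in the same structure as the weights means assessors see the observable behavior for each score while they rate, rather than working from memory.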

Step 4: Train Assessors for Consistency

Even the best rubric won't help if evaluators interpret it differently. Calibrate your assessors by having them independently score the same sample candidate responses, then compare their ratings.

During calibration sessions, discuss any rating discrepancies. Often, evaluators notice different aspects of performance or weight criteria differently. These discussions help align interpretation and improve inter-rater reliability.
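A calibration session can start from a simple comparison like the one sketched below, which flags criteria where assessors' ratings of the same sample response are far apart. The assessor labels, criterion names, and the "more than one point apart" threshold are assumptions for illustration; formal inter-rater reliability statistics go further than this.

```python
from statistics import mean


def calibration_report(scores_by_assessor, max_spread=1):
    """For each criterion, report the mean rating and whether assessors
    differ by more than `max_spread` points on the 1-5 scale."""
    criteria = next(iter(scores_by_assessor.values())).keys()
    report = {}
    for criterion in criteria:
        ratings = [scores[criterion] for scores in scores_by_assessor.values()]
        spread = max(ratings) - min(ratings)
        report[criterion] = {
            "mean": round(mean(ratings), 2),
            "spread": spread,
            "needs_discussion": spread > max_spread,
        }
    return report


# Three hypothetical assessors scoring the same sample candidate response.
sample = {
    "assessor_a": {"communication": 4, "prioritization": 2, "documentation": 3},
    "assessor_b": {"communication": 4, "prioritization": 4, "documentation": 3},
    "assessor_c": {"communication": 5, "prioritization": 3, "documentation": 3},
}

report = calibration_report(sample)
for criterion, row in report.items():
    print(criterion, row)
```

In this made-up data, only prioritization would be flagged, so that is where the calibration discussion would focus.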

Document common edge cases and evaluation pitfalls. What should assessors do if a candidate asks for information not provided in the scenario? How should they handle technology issues during the simulation? Clear protocols prevent inconsistency.

Establish bias-check protocols where assessors consciously review their scoring for patterns. If someone consistently rates candidates from certain demographics lower, that's a red flag requiring intervention.

Step 5: Pilot Test with Current Employees

Before using a simulation with actual candidates, run it with 5-7 current employees who hold the same role. Include both high performers and average performers in your pilot group.

The simulation should distinguish between performance levels. If your top performer scores the same as your weakest team member, something is wrong with your scenario design or rubric. Adjust difficulty or criteria until the simulation reliably differentiates performance levels.

Target an average score of 60-70% when testing with current employees. If everyone scores above 90%, your simulation is too easy and won't help you distinguish strong candidates from weak ones. If everyone scores below 50%, it's unrealistically difficult.
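The pilot checks described above can be summarized in a few lines. This is a rough sketch under stated assumptions: scores are already expressed as percentages, each pilot participant is labeled as a high performer or not, and the sample numbers are invented.

```python
from statistics import mean


def evaluate_pilot(results, target=(60, 70)):
    """Summarize a pilot run. `results` is a list of (percent_score, is_high_performer)."""
    all_scores = [score for score, _ in results]
    high = [score for score, is_high in results if is_high]
    other = [score for score, is_high in results if not is_high]
    average = mean(all_scores)
    return {
        "average": round(average, 1),
        "in_target_range": target[0] <= average <= target[1],
        "too_easy": min(all_scores) > 90,   # everyone scored above 90%
        "too_hard": max(all_scores) < 50,   # everyone scored below 50%
        # A positive gap means high performers outscored the rest of the pilot group.
        "high_vs_other_gap": round(mean(high) - mean(other), 1) if high and other else None,
    }


# Six hypothetical current employees: two high performers, four average performers.
pilot = [(82, True), (78, True), (64, False), (58, False), (61, False), (55, False)]
summary = evaluate_pilot(pilot)
print(summary)
```

If the gap between high performers and everyone else is near zero or negative, that is the signal to revisit the scenario or the rubric before using the simulation with candidates.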

Gather feedback from pilot participants about clarity and realism. Did the instructions make sense? Did the scenario feel like actual work they do? Were time limits appropriate? Their insights help you refine before you use the simulation in real hiring.

Step 6: Integrate with Structured Interviews

Job simulations deliver maximum value when combined with other assessment methods. Use simulation results to inform targeted follow-up questions during structured interviews.

For example, if a candidate struggled with prioritization during an inbox management simulation, you might ask behavioral questions about how they typically handle competing priorities. If they excelled at creative problem-solving, dig deeper into where they learned that skill and how they've applied it in past roles.

This integration achieves the predictive validity sweet spot. Simulations show you what candidates can do. Structured interviews reveal their judgment, learning ability, and self-awareness about their performance. Cognitive assessments measure their ability to learn and adapt. Together, these methods predict success far better than any single approach.

The combined methodology approach—cognitive assessments plus simulations plus structured interviews—represents the research-backed framework used by organizations serious about hiring accuracy.

Step 7: Analyze Results and Continuously Refine

Track correlation between simulation scores and actual job performance after hiring. Do candidates who score in the top quartile on your simulation become top performers six months later? If not, your simulation may be testing the wrong skills or your rubric needs recalibration.
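To track that relationship, a plain Pearson correlation between simulation scores and later performance ratings is a reasonable starting point. The sketch below uses invented numbers and assumes performance is rated on a 1-5 scale at the six-month mark. With only a handful of hires, the result will be noisy, so treat it as a trend to watch rather than proof.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of the same length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list has no variation")
    return covariance / sqrt(var_x * var_y)


# Hypothetical data: simulation score at hiring vs. manager rating six months later.
simulation_scores = [55, 62, 70, 74, 81, 90]
performance_ratings = [2.5, 3.0, 3.2, 3.1, 4.0, 4.4]

r = pearson_r(simulation_scores, performance_ratings)
print(f"correlation: {r:.2f}")
```

A value close to zero over several hiring cycles would suggest the simulation is testing the wrong skills or the rubric needs recalibration.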

Gather feedback from hiring managers about new hire performance. Which aspects of the job are new hires struggling with? If there's a consistent gap the simulation didn't catch, you may need to add a new evaluation dimension or redesign part of the scenario.

Update simulations annually to reflect role evolution. As jobs change—new tools, different processes, shifting priorities—your simulations must keep pace. A simulation designed three years ago may no longer assess the most critical current responsibilities.

Document everything you learn in a continuous improvement log. Over time, these insights compound into a recruitment process that gets progressively more accurate with each hiring cycle.

Common Implementation Mistakes to Avoid

Even with a solid framework, certain pitfalls trip up teams implementing simulations for the first time.

Don't make simulations too long. The temptation is to test everything, but assessment fatigue sets in quickly. Beyond 25 minutes, you get diminishing returns and start measuring stamina more than skill. Keep it focused.

Avoid subjective evaluation criteria that reintroduce the bias you're trying to eliminate. Phrases like "cultural fit" or "likability" in your rubric defeat the purpose. Stick to observable behaviors tied to job outcomes.

Watch for scenarios that don't reflect actual job realities. If you design simulations in a conference room without consulting people who actually do the work, you'll likely miss crucial real-world constraints or complexities.

Finally, don't skip assessor training. A brilliant simulation becomes worthless if five different evaluators interpret the rubric five different ways. Consistency matters enormously.
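One lightweight way to check consistency is to have every assessor score the same recorded simulations, then compare their scores. The sketch below assumes a 1-5 rubric scale. The assessor names, scores, and the one-point tolerance are hypothetical choices, not an established standard.

```python
from itertools import combinations

# Hypothetical calibration data: each assessor scores the same five
# recorded simulations on the 1-5 rubric scale.
scores = {
    "assessor_a": [4, 3, 5, 2, 4],
    "assessor_b": [4, 3, 4, 2, 5],
    "assessor_c": [5, 1, 4, 2, 4],
}

def pairwise_agreement(a, b, tolerance=1):
    """Share of recordings where two assessors are within `tolerance` points."""
    close = sum(1 for x, y in zip(a, b) if abs(x - y) <= tolerance)
    return close / len(a)

def flag_disputed(all_scores, max_spread=1):
    """Indices of recordings whose scores differ by more than `max_spread`."""
    per_recording = zip(*all_scores.values())
    return [i for i, row in enumerate(per_recording) if max(row) - min(row) > max_spread]

for (name_a, a), (name_b, b) in combinations(scores.items(), 2):
    print(f"{name_a} vs {name_b}: {pairwise_agreement(a, b):.0%} within one point")

print("Recordings to discuss in calibration:", flag_disputed(scores))
```

Any recording that gets flagged is a good candidate for a calibration discussion, because it shows where the rubric wording leaves room for interpretation.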

From Simulation to Success: Post-Hire Development

Here's where most organizations miss a critical opportunity. The insights you gain from job simulations shouldn't disappear once you make the hire. They should directly inform how you onboard and develop new team members.

Using Simulation Insights for Personalized Onboarding

Your simulation results reveal specific development areas for each new hire. Perhaps someone demonstrated strong strategic thinking during your operations coordinator simulation but showed weaker organizational skills. Maybe a candidate excelled at empathy during your customer service scenario but needs to build knowledge of your specific products.

These insights allow you to create customized 30-60-90 day plans that address the exact gaps identified during assessment. Instead of generic onboarding that covers everything for everyone, you focus each person's development on their specific growth edges.

For example, if a simulation showed that someone has excellent communication skills but struggles with prioritization frameworks, you can front-load their onboarding with training on priority matrices, time management systems, and decision frameworks. You're not wasting their time on communication training they don't need.
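To make the idea concrete, here is a rough sketch of how rubric scores could be turned into a 30-60-90 day plan. The dimension names, training modules, and the threshold of 3 are hypothetical. You would substitute your own rubric and training catalog.

```python
# Hypothetical training catalog keyed by rubric dimension
TRAINING = {
    "prioritization": ["priority matrices", "time management systems", "decision frameworks"],
    "communication": ["written communication workshop"],
    "product_knowledge": ["product deep-dive sessions"],
}

PHASES = ["days 1-30", "days 31-60", "days 61-90"]

def build_plan(rubric_scores, threshold=3):
    """Schedule training for weak dimensions, weakest first; skip strong ones."""
    gaps = sorted(
        (dim for dim, score in rubric_scores.items() if score <= threshold),
        key=lambda dim: rubric_scores[dim],
    )
    plan = {phase: [] for phase in PHASES}
    for i, dim in enumerate(gaps):
        # Any gaps beyond the third land in the final phase
        phase = PHASES[min(i, len(PHASES) - 1)]
        plan[phase].extend(TRAINING.get(dim, []))
    return plan

# Hypothetical new hire: strong communicator, weak on prioritization
new_hire = {"communication": 5, "prioritization": 2, "product_knowledge": 3}
plan = build_plan(new_hire)

for phase, modules in plan.items():
    print(phase, "->", modules or "no targeted training")
```

In this example the communication workshop never appears in the plan, because the simulation showed no gap there.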

This personalized approach accelerates time-to-productivity dramatically. New hires aren't spinning wheels on development that doesn't matter for them. They're focused exactly where they need growth.

Ongoing Coaching for Continuous Improvement

The best recruitment teams don't stop at personalized onboarding. They establish ongoing coaching rhythms that continuously develop the capabilities first assessed during simulations.

Biweekly coaching sessions can track progress against initial simulation baselines. How has prioritization improved since week one? Is the executive assistant now handling competing demands more fluidly? Regular check-ins with reference back to simulation performance create clear development arcs.

Organizations using this approach report measurable improvements. Quality assurance scores increase by 50-60% within the first 90 days. Training completion rates exceed industry averages by two to three times. These aren't accidents—they're the result of development plans built on accurate initial assessment.

The retention connection matters too. Employees who receive development support from day one, tailored to their actual needs, show dramatically lower turnover. When people feel invested in and see themselves improving, they stay. The simulation-to-coaching pipeline transforms hiring from a one-time transaction into a long-term development relationship.

The Future of Job Simulations: AI and Emerging Trends

Job simulation adoption is accelerating, driven by technology advances and fundamental shifts in how organizations think about talent.

AI-Powered Simulation Tools

Artificial intelligence is making simulations more scalable and sophisticated. Platforms now use natural language processing to analyze candidate communication patterns during text-based simulations. AI can score certain objective criteria automatically—like whether a bookkeeper categorized transactions correctly—freeing human evaluators to focus on judgment-based assessment.
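Objective scoring of this kind does not always need sophisticated AI. As a minimal sketch, the bookkeeping example can be scored with a plain answer key. The transaction IDs, categories, and function name below are invented for illustration and do not reflect any particular platform.

```python
# Hypothetical answer key: transaction ID -> expected category
ANSWER_KEY = {
    "txn-001": "office supplies",
    "txn-002": "travel",
    "txn-003": "software subscriptions",
    "txn-004": "meals and entertainment",
}

def score_categorization(submission, answer_key=ANSWER_KEY):
    """Return the share of correctly categorized transactions and the misses."""
    misses = [
        txn_id
        for txn_id, expected in answer_key.items()
        # Ignore case and stray whitespace; a blank answer counts as a miss
        if submission.get(txn_id, "").strip().lower() != expected
    ]
    accuracy = (len(answer_key) - len(misses)) / len(answer_key)
    return {"accuracy": accuracy, "misses": misses}

candidate_submission = {
    "txn-001": "Office Supplies",
    "txn-002": "travel",
    "txn-003": "office supplies",  # wrong category
    # txn-004 left blank
}

result = score_categorization(candidate_submission)
print(result)
```

The list of misses gives a human evaluator a starting point for follow-up questions about why the candidate chose a particular category.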

Some tools even adapt simulation difficulty in real time based on candidate performance, similar to how computer-adaptive standardized tests adjust question difficulty. This adaptive approach provides more precise skill measurement across a wider range of ability levels.
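The simplest version of adaptive difficulty is a "staircase": step up after a correct response, step down after a miss. The sketch below shows that rule only. Commercial tools likely use more sophisticated statistical models, and the five difficulty levels and starting point here are assumptions.

```python
def next_difficulty(current, was_correct, lowest=1, highest=5):
    """Move one level up after a correct response, one level down after a miss."""
    step = 1 if was_correct else -1
    return max(lowest, min(highest, current + step))

def run_adaptive(responses, start=3):
    """Return the sequence of difficulty levels a candidate is shown."""
    level = start
    path = [level]
    for was_correct in responses:
        level = next_difficulty(level, was_correct)
        path.append(level)
    return path

# Hypothetical candidate: misses only the third task
path = run_adaptive([True, True, False, True, True, True])
print(path)
```

A strong candidate quickly climbs to the hardest tasks and stays there, while a struggling candidate settles at a lower level, so each person spends most of the assessment near the edge of their ability.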

However, technology should augment human judgment, not replace it. The nuances of emotional intelligence, creative problem-solving, and cultural alignment still require human evaluation. The sweet spot is using AI for objective, rules-based scoring while retaining human assessors for judgment-based, context-dependent criteria.

2025-2026 Hiring Trends Driving Adoption

Several macro trends are accelerating the shift toward simulation-based hiring.

Skills-based hiring adoption hit 85% in 2025, up from 81% just one year earlier. This momentum continues building as more organizations recognize that credentials don't predict performance. Industry projections suggest adoption will reach 92% or higher by 2027.

Legislative and regulatory pressure around bias-resistant hiring methods is increasing. As governments scrutinize hiring practices more closely, simulations offer demonstrable fairness through objective, standardized assessment. Organizations can document that every candidate faced identical scenarios and evaluation criteria.

Remote work permanence has made virtual simulations standard practice. When you're hiring someone who'll work remotely anyway, assessing them through a virtual simulation creates perfect alignment between assessment method and work reality.

Finally, economic uncertainty makes "hire right the first time" a strategic imperative. When budgets are tight and hiring mistakes are costly, the 51-65% predictive validity of simulations combined with structured interviews is hard to ignore compared to the 38% predictive validity of traditional interviews.

The trajectory is clear: job simulations are moving from cutting-edge practice to standard operating procedure for organizations serious about hiring quality.

Frequently Asked Questions

What is a job simulation interview?

A job simulation interview is a pre-employment assessment where candidates perform actual work tasks under realistic conditions. Unlike traditional interviews that ask about past experience or hypothetical scenarios, simulations require candidates to demonstrate skills through practical exercises like managing an inbox, handling a customer complaint, or processing transactions. This approach achieves 51-65% predictive validity when combined with structured interviews and cognitive assessments.

How long should a job simulation assessment take?

Most effective job simulations run 15-20 minutes. This duration provides enough time to observe meaningful performance patterns without exhausting candidates or consuming excessive hiring resources. More complex roles might extend to 30 minutes, but research shows diminishing returns beyond 25 minutes. The key is condensing the most critical job tasks into a focused, time-bound assessment that mirrors real work pressures.

Are job simulations better than traditional interviews?

Job simulations aren't necessarily "better" than traditional interviews—they're complementary. Simulations excel at assessing observable skills and demonstrating actual capability, achieving much higher predictive validity than unstructured conversations. However, traditional interviews remain valuable for evaluating culture fit, communication style, and building candidate relationships. The best hiring processes combine job simulations, structured behavioral interviews, and cognitive assessments to achieve maximum predictive accuracy.

What industries use job simulation interviews most?

Healthcare, customer service, hospitality, tech startups, and administrative roles lead in simulation adoption. Any industry with clearly defined, observable tasks benefits from this approach. Healthcare uses compliance scenarios, tech companies assess software proficiency, hospitality evaluates service recovery skills, and property management tests coordination capabilities. The versatility means simulations work across virtually any sector—the key is identifying which job tasks can be realistically replicated in a brief assessment window.

How do you create a job simulation for hiring?

Start with job task analysis to identify the 5-7 most critical daily responsibilities. Design realistic scenarios that mirror those tasks within 15-20 minutes. Develop objective scoring rubrics with 1-5 scales defining what excellent versus poor performance looks like. Pilot test with current employees to validate that your simulation distinguishes strong performers from average ones. Train assessors for consistent evaluation, then integrate simulation results with structured interviews for comprehensive assessment.

Conclusion

Job simulations represent one of the most significant advances in hiring accuracy over the past century of recruitment research. By shifting from "tell me about your experience" to "show me what you can do," organizations can reach predictive validity of 51-65%, compared with 38% for traditional interviews.

The ten examples across industries prove this methodology isn't limited to specific sectors or role types. Whether you're hiring for property management, healthcare, hospitality, or tech startups, the underlying principle remains powerful: observed performance predicts future success far better than claimed experience.

Implementation doesn't require massive resources or complex technology. Start small by piloting one simulation for your highest-impact role. Use the seven-step framework to design your scenario, build objective rubrics, and validate through testing. Over time, expand simulations across your hiring process as you see the quality improvement in your new hires.

At Pathfinder Talent Solutions, we've built our entire recruitment methodology around this research-backed approach. We combine Schmidt-Hunter validated simulations with cognitive assessments and structured interviews to achieve hiring accuracy that's three to five times greater than traditional methods. But we don't stop at placement. The insights from our simulations directly inform personalized development plans and ongoing biweekly coaching that turns initial capability into sustained excellence.

Ready to transform your hiring process? Schedule a consultation to learn how structured hiring methodologies can dramatically improve your talent outcomes. Because the best predictor of future performance isn't where someone worked—it's how they actually perform.